MemCam: Memory-Augmented Camera Control for Consistent Video Generation

Guilin University of Electronic Technology

Demo Videos

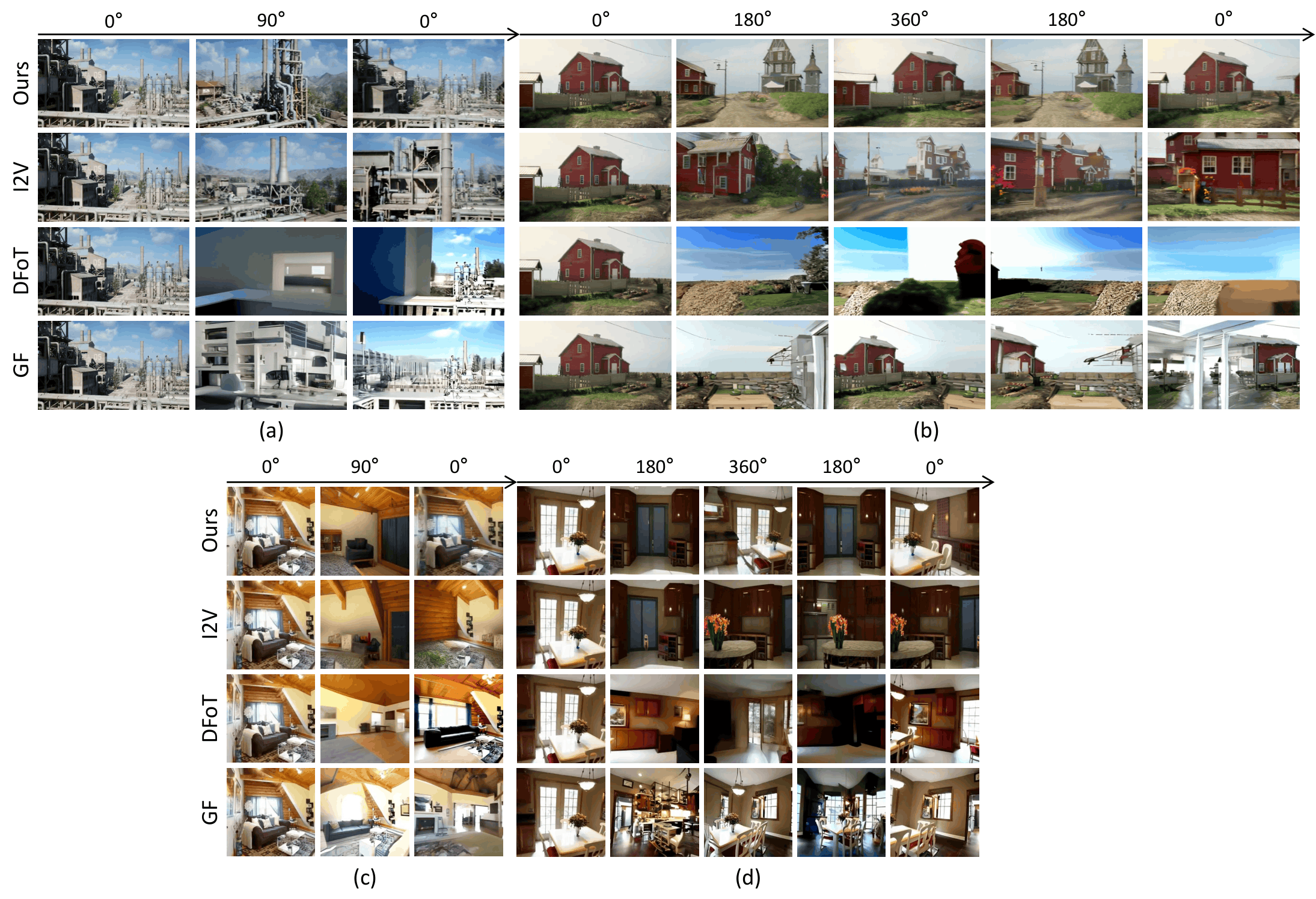

MemCam maintains consistent scene structure even after 360° camera rotations. When the camera returns to the starting viewpoint, the original scene appearance is faithfully reconstructed.

Abstract

Interactive video generation has significant potential for scene simulation and video creation. However, existing methods often struggle with maintaining scene consistency during long video generation under dynamic camera control due to limited contextual information. To address this challenge, we propose MemCam, a memory-augmented interactive video generation approach that treats previously generated frames as external memory and leverages them as contextual conditioning to achieve controllable camera viewpoints with high scene consistency.

To enable longer and more relevant context, we design a context compression module that encodes memory frames into compact representations and employs co-visibility-based selection to dynamically retrieve the most relevant historical frames, thereby reducing computational overhead while enriching contextual information. Experiments show that MemCam significantly outperforms existing baseline methods in terms of scene consistency, particularly in long video scenarios with large camera rotations.

Method

MemCam is built on the Wan2.1 1.3B DiT and introduces two key designs that together enable long-range scene consistency without 3D reconstruction.

Context Compression Module

Encodes historical frames via spatial 2× downsampling, reducing token count to 1/4 and achieving ~5× inference speedup with minimal quality loss.

Co-Visibility Retrieval

Uses Monte Carlo FOV overlap estimation to dynamically select the most viewpoint-relevant historical frames, rather than simply the most recent ones.

Camera Encoder

A single-layer MLP per DiT Block that encodes the 3×4 [R|t] camera matrix and adds it element-wise to the main feature stream.

Segment-wise Inference

Generates video segment by segment; memory is updated after each segment and the highest co-visibility frame is always selected from history.

Results

MemCam achieves the best FVD across all settings. The gains are most significant in the 360° scenario, where longer duration and larger camera rotation pose greater challenges. Bold = best, underline = second best.

| Method | PSNR ↑ | SSIM ↑ | LPIPS ↓ | FVD ↓ |

|---|---|---|---|---|

| Context-as-Memory — 90° Round-trip | ||||

| I2V | 15.81 | 0.452 | 0.470 | 528.51 |

| DFoT | 16.76 | 0.474 | 0.393 | 683.59 |

| GeometryForcing | 16.57 | 0.486 | 0.348 | 557.66 |

| MemCam (Ours) | 17.83 | 0.506 | 0.357 | 215.71 |

| Context-as-Memory — 360° Round-trip | ||||

| I2V | 9.75 | 0.332 | 0.603 | 988.82 |

| DFoT | 8.94 | 0.252 | 0.613 | 1188.34 |

| GeometryForcing | 10.07 | 0.402 | 0.565 | 852.05 |

| MemCam (Ours) | 14.81 | 0.423 | 0.504 | 167.87 |

| RealEstate10K — 90° Round-trip (Zero-shot) | ||||

| I2V | 16.26 | 0.488 | 0.362 | 552.01 |

| DFoT | 17.17 | 0.505 | 0.399 | 539.89 |

| GeometryForcing | 17.70 | 0.597 | 0.316 | 519.78 |

| MemCam (Ours) | 17.61 | 0.544 | 0.314 | 269.82 |

| RealEstate10K — 360° Round-trip (Zero-shot) | ||||

| I2V | 10.16 | 0.284 | 0.611 | 789.62 |

| DFoT | 10.43 | 0.281 | 0.564 | 1002.39 |

| GeometryForcing | 11.19 | 0.379 | 0.405 | 419.60 |

| MemCam (Ours) | 16.52 | 0.550 | 0.400 | 131.96 |

Citation

title = {MemCam: Memory-Augmented Camera Control for Consistent Video Generation},

author = {Gao, Xinhang and Guan, Junlin and Luo, Shuhan and Li, Wenzhuo and Tan, Guanghuan and Wang, Jiacheng},

booktitle = {International Joint Conference on Neural Networks (IJCNN)},

year = {2026}

}